GRACE:

Gravity Recovery And Climate Experiment

An Earth System Science Pathfinder (ESSP) Mission

Byron D. Tapley

(Principal Investigator)

Center for Space Research

The University of Texas at Austin

Chris Reigber

(Co-Principal Investigator)

GeoForschungsZentrum

Potsdam


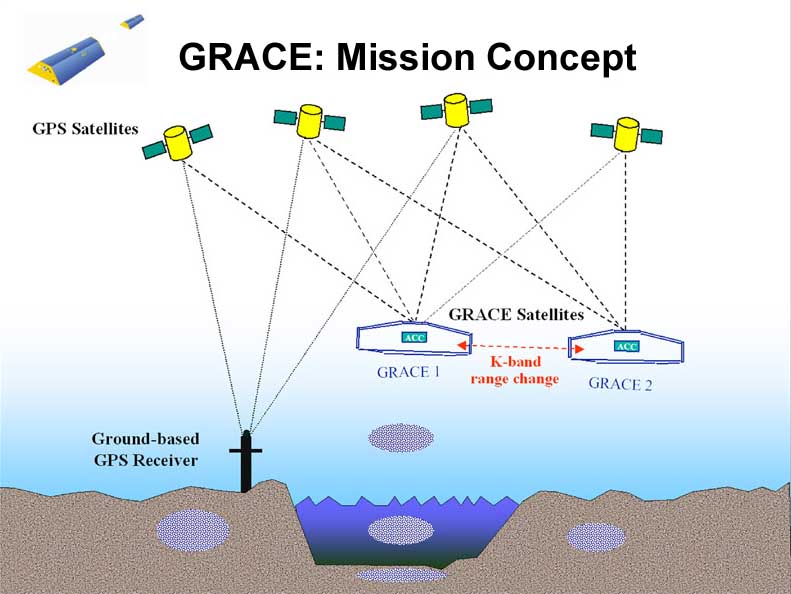

GRACE Mission

Science Goals

High resolution, mean & time variable gravity field mapping for Earth System Science applications

Mission Systems

Instruments

- HAIRS (JPL/SSL/APL)

- SuperSTAR (ONERA)

- Star Cameras (DTU)

- GPS Receiver (JPL)

Satellite (JPL/DSS)

Launcher (DLR/Eurokot)

Operations (DLR/GSOC)

Science (CSR/JPL/GFZ)

Orbit

Launch: February 2002

Altitude: 485 km

Inclination: 89 deg

Eccentricity: ~0.001

Lifetime: 5 years

Non-Repeat Ground Track, Earth Pointed, 3-Axis Stable

COMPUTATIONAL CHALLENGE

Up to 180x180 spherical harmonics (n ~ 32,400 parameters)

- 180x180 solution may be required to accurately smooth to 160x160

Daily computational task

- Numerical integration of ~194,000 differential equations (6n)

- Process ~17,280 SST observations (m)

- Calculate and write 4.5 Gbytes of partial derivatives

- Accumulate partials (m*n*n ~18 trillion FLOPs)

- Comparable requirements for GPS data

Requires > 8 Gbytes of machine memory for accumulation, solution, and covariance (n*n)

- Solution covariance alone will be 4.2 Gbytes (n*n/2)
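The figures on this slide follow from n and m by simple arithmetic, which the sketch below reproduces. It assumes 8-byte double-precision values (the slides do not state the word size) and uses the slide's approximation n = 180 x 180; the function name `daily_sizing` and its dictionary keys are illustrative only.

```python
# Back-of-envelope sizing for one day of 180x180 processing.
# Assumption: every stored value is an 8-byte double (not stated on the slide).
BYTES_PER_VALUE = 8


def daily_sizing(degree=180, m_obs=17_280):
    """Return the slide's headline quantities for a degree x degree solution."""
    n = degree * degree  # slide's approximation: ~32,400 parameters
    return {
        "n_parameters": n,
        "diff_equations": 6 * n,                                  # 6n
        "partials_gbytes": m_obs * n * BYTES_PER_VALUE / 1e9,     # m*n values
        "accumulation_flops": m_obs * n * n,                      # m*n*n
        "full_matrix_gbytes": n * n * BYTES_PER_VALUE / 1e9,      # n*n values
        "covariance_gbytes": n * n / 2 * BYTES_PER_VALUE / 1e9,   # n*n/2 values
    }


if __name__ == "__main__":
    for name, value in daily_sizing().items():
        print(name, value)
```

Under these assumptions the results match the slide's rounded values: about 194,000 equations, about 4.5 Gbytes of partials, about 18 trillion FLOPs, and about 4.2 Gbytes for the covariance.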

COMPUTATIONAL REQUIREMENTS

HPC Resources Used

- TACC T3Es, NASA T3E and ORIGIN 2000, CSR/TACC Cray SV-1

- TACC SGI/STK Data Archive

Computational rate (for 180x180 solution)

- Each day's processing requires 30-60 CPU hours (@200 Mflops)

- Must be able to process several days' worth of data each day

Storage requirements (for 180x180 solution)

- ~8 Gbytes of partials each day (short-term storage)

- 400-800 Gbytes of information equations and covariances each year

If computational load shared between CSR and remote computers, ~8 Gbytes/day must move across internet

On order of 100,000 CPU hours per year
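As a rough cross-check of the computational rate, the accumulation cost m*n*n can be converted to CPU hours at a given sustained speed. This is only a sketch: the function name is hypothetical, and it counts the SST accumulation alone, ignoring trajectory integration, GPS data, and the solution step.

```python
def accumulation_cpu_hours(m_obs, n_params, sustained_flops):
    """CPU hours needed to accumulate m observations into an n x n system.

    Uses the slide's m*n*n operation count and a sustained rate in FLOP/s.
    """
    seconds = m_obs * n_params ** 2 / sustained_flops
    return seconds / 3600.0


if __name__ == "__main__":
    # 17,280 SST observations, ~32,400 parameters, 200 Mflops sustained
    print(accumulation_cpu_hours(17_280, 32_400, 200e6))
```

With the slide's numbers this gives roughly 25 CPU hours for SST accumulation alone, which is consistent with the quoted 30-60 CPU hours per day once the comparable GPS workload is included. A single pass over a year at 30-60 CPU hours per day comes to roughly 11,000-22,000 CPU hours; the slides do not break down the remainder of the ~100,000 hour figure.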

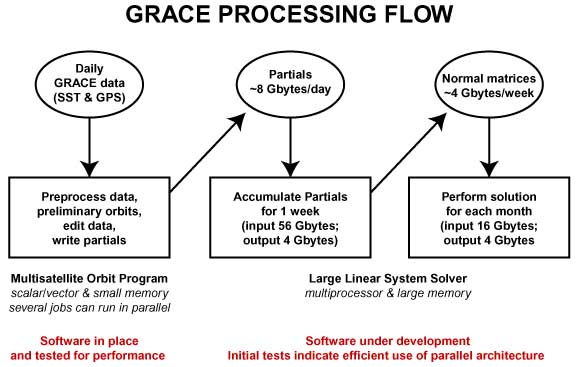

COMPUTATION STRATEGIES

Generate daily data equations concurrently on current vector platform (essentially parallel/vector)

- Edit data & integrate satellite trajectories

- Calculate and write partials (~8 Gbytes/day)

Accumulate and solve information equations on available parallel platforms

- Already demonstrated to be highly parallel process

- Using computing resources elsewhere requires moving hundreds of Gbytes of data across internet
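The accumulation step parallelizes well because information equations are additive: each batch of data can be accumulated independently and the results summed. The toy sketch below illustrates this with plain Python lists. It assumes an unweighted least-squares formulation (N = sum of a a^T, b = sum of a y), which the slides do not spell out, and the names `accumulate` and `combine` are hypothetical. A production code would use a parallel dense linear algebra library instead.

```python
def accumulate(partials, residuals, n):
    """Accumulate N = sum(a a^T) and b = sum(a * y) over one batch.

    partials:  list of rows, each holding n partial derivatives
    residuals: list of observation residuals, one per row
    """
    N = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for a, y in zip(partials, residuals):
        for i in range(n):
            b[i] += a[i] * y
            for j in range(n):
                N[i][j] += a[i] * a[j]  # n*n multiply-adds per observation
    return N, b


def combine(eq1, eq2):
    """Sum two independently accumulated systems (e.g. two days of data)."""
    (N1, b1), (N2, b2) = eq1, eq2
    N = [[x + y for x, y in zip(r1, r2)] for r1, r2 in zip(N1, N2)]
    b = [x + y for x, y in zip(b1, b2)]
    return N, b


if __name__ == "__main__":
    day1 = ([[1.0, 2.0], [0.0, 1.0]], [1.0, 2.0])
    day2 = ([[3.0, 1.0]], [0.5])
    separate = combine(accumulate(*day1, 2), accumulate(*day2, 2))
    together = accumulate(day1[0] + day2[0], day1[1] + day2[1], 2)
    print(separate == together)
```

The inner double loop is where the m*n*n operation count comes from: every observation touches all n*n entries of the matrix.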

DEMONSTRATED PERFORMANCE

Working with PLAPACK developers to enhance parallel performance

Using 32 T3E nodes, calculate solution and covariance for 110x110

- 334 Mflops/node (50% peak), 11 Gflops total, 7 min wall clock

Using 512 T3E nodes, calculate solution and covariance for 200x200

- 183 Mflops/node (30% peak), 94 Gflops effective total speed

Using a single SV-1 MultiStreaming Processor, nearly 1 Gflop attained for 120x120 data accumulation (MSP has 4.8 Gflop theoretical peak)

- Actual performance significantly affected by overall load on machine due to memory bandwidth limitations
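The aggregate rates quoted above are the per-node rate multiplied by the node count, and the SV-1 result can be expressed as a fraction of peak in the same way. A minimal sketch, with hypothetical helper names:

```python
def aggregate_gflops(nodes, mflops_per_node):
    """Total sustained rate in Gflops for a run on `nodes` processors."""
    return nodes * mflops_per_node / 1000.0


def fraction_of_peak(sustained_gflops, peak_gflops):
    """Sustained rate as a fraction of theoretical peak."""
    return sustained_gflops / peak_gflops


if __name__ == "__main__":
    print(aggregate_gflops(32, 334))    # 110x110 run on 32 T3E nodes
    print(aggregate_gflops(512, 183))   # 200x200 run on 512 T3E nodes
    print(fraction_of_peak(1.0, 4.8))   # SV-1 MSP, 120x120 accumulation
```

These work out to about 10.7 and 93.7 Gflops, matching the slide's rounded 11 and 94 Gflops, and to roughly 21% of peak for the single MSP.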
